Design no longer needs to happen within a single screen or device. We have reached a new era in history where our bodies, cars, bedrooms, heaters, streets and just about everything can be an interface.

This article will present exciting technologies and interfaces built on new kinds of interaction, and take a historical perspective on how human behaviour with machines has evolved. For simplicity’s sake, I like to group human interaction with the environment and technology into four ages.

Google’s Project Soli

The Age Of Tools

We used primitive objects and symbols to communicate.

Humans began communicating with symbolic representations carved into any surface. Hieroglyphics were one of the first ways that humans communicated, and they were highly symbolic. This symbolism would later develop into art, writing, documentation and story-telling. We can even argue that we have come full circle and are using the symbols on our keyboards to convey subtleties beyond words, even if they are silly.

The tools that we used to communicate became more and more sophisticated, resulting in things still widely used such as pens.

The evolution of communication

The Age Of Machines

When hardware was the interface.

The industrial revolution placed emphasis on productivity. Welcome to the age of the machine, where we built objects at scale to make our lives simpler.

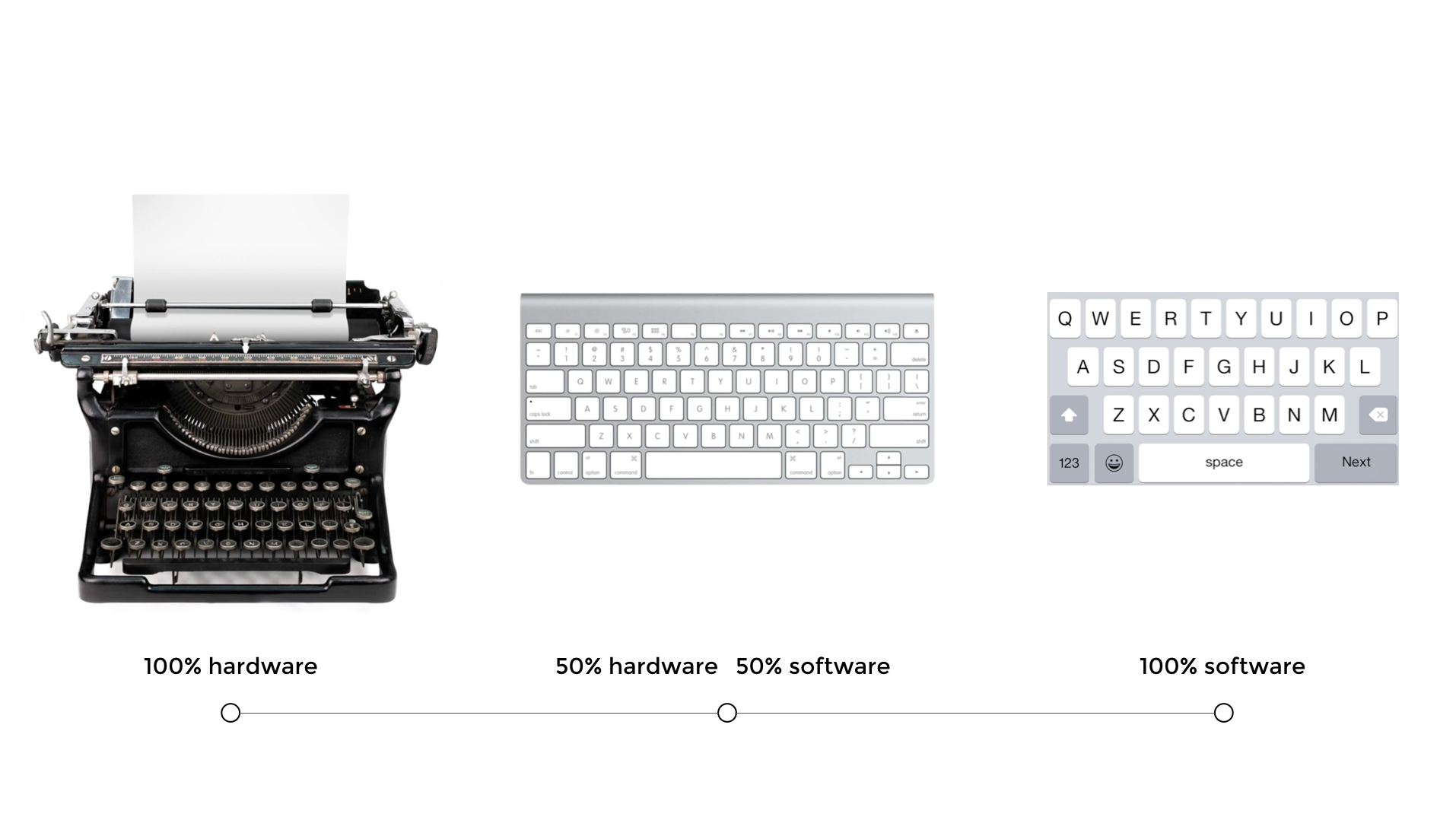

The typewriter was invented in 1868 by Christopher Latham Sholes. We began tapping physical keys to make words, still using our fingers, instead of a pen. It helped create a consistent and effective format that could be easily adopted, and it saved us time.

The drawback, however, was that we needed to learn how to type. We were mass-producing machines, and the power shifted to the machine. Even though the hardware was designed to be the interface, we still had to learn how to use it.

The Age of Software

Learned skills from using hardware become metaphors to teach us how to use software.

When software needed an interface, designers looked to existing hardware and behaviour to make it easy for us to learn how to use it. For example, we looked back to the typewriter to learn how to type on a screen. The typewriter inspired the keyboard, which made it easier for us to know what to do. We had already learned to type, so the natural progression was to begin interacting with screens.

We see this same transition with our smartphone keypads looking like mini versions of the very same keyboards and typewriters. Adorable and useful. As we began to touch, we began to define a completely new way of interacting with our environment.

Skeuomorphism is another example: it makes the two-dimensional screen look like a three-dimensional world to help users understand how they should interact with the interface. Designers created interfaces that were already familiar by depicting things like the controls of a radio or mixer in audio interfaces. Apple famously led this trend under the direction of Steve Jobs. It wasn’t until Jonathan Ive became more powerful at Apple that skeuomorphic design slowly evolved into flat design, punctuated by the release of iOS 7 in 2013. We were ready to make the leap to less literal cues and could now appreciate the simplicity of a reduced interface. The current iOS Human Interface Guidelines actively encourage the shift, noting that “Bezels, gradients, and drop shadows sometimes lead to heavier UI elements” and recommending a “focus on the content to let the UI play a supporting role.”

Material Design also shifts towards a different representation of the third dimension by giving the entire canvas depth, as opposed to the individual UI elements, as in skeuomorphism. In Material Design, the “surfaces and edges of the material provide visual cues that are grounded in reality. The use of familiar tactile attributes helps users quickly understand affordances. The fundamentals of light, surface, and movement are key to conveying how objects move, interact, and exist in space and in relation to each other.”

Touch is human-centric

On why touch worked

With the rise of the smartphone, we taught ourselves all kinds of funny gestures, partly for the novelty, partly to be able to use the thing, and of course because it was really cool to be able to do all kinds of fun and even secret stuff with our hands. We learned the difference between a pinch, a tap and a long tap, and invented more gestures than we can keep up with.

We started spreading and pinching our fingers as a way of zooming in and out. This behaviour became so natural that I have witnessed grown men try to zoom in on physical maps.

Touch works because it is intuitive. You see babies working tablet devices faster than their grandparents these days, simply because we are born to explore things with our fingers. It’s innate and takes us back to where we started, at the beginning of communication.

Touch is innate

Touch came with a price

And the user experience often suffered

We wanted to touch everything in sight and along the way, we made up some pretty obscure gestures and made it nearly impossible to find things.

That’s because we hid stuff.

We hid a lot of the main user interface features. A major part of the problem was competition between Android and iOS, where initially iOS led the way and significantly reduced its Human Interface Guidelines. The simplicity looked beautiful, but we were just hiding the ugly or complicated stuff for later, and often made interfaces more difficult to use. Android emulated a lot of the worst things Apple implemented, and it really wasn’t until Material Design was introduced that there was any consistency in Android design at all. The myriad of device sizes didn’t exactly help either.

We also forgot about consistency.

A swipe on iOS can mean reading an email, deleting an email, archiving an email, or playfully connecting with my next Tinder match, depending on the app and the context. As designers, we cling to extensive onboarding sequences just to show users what to do.

Touch only works on big screens

Now we have new devices with screens so small that touch becomes difficult. Designers of these devices are re-introducing hardware-centric features that humans struggle with.

Apple watch

Even if your fingers are finer and more dextrous than mine, I still smile at the thought of poking around on our wrists.

You cannot navigate something as complex as the internet with a hardware-centric feature such as the Digital Crown. It is a real-world spin-off from familiar watch-adjusting behaviour, but it is time-consuming as well as fiddly.

The Age Of The Self

The world is our interface

Now that the time has come, how do we design experiences and products in a world where any environment is interactive?

The next iteration partly illustrates us coming full circle. The Apple Pencil is a piece of technology, both hardware and software, that is helping us write again, much like where we once started: a simple tool and a surface.

It just so happens that this simple tool is a not-so-simple Apple Pencil and the surface happens to be a pretty advanced iPad Pro. Specifications aside, what is exciting here is that we are now getting to a point where technology is so advanced that we can “unlearn” how to use it.

The Apple Pencil is human centric because it takes 2 things that we are already familiar with: an actual pencil and an iPad, meaning that we don’t need to learn anything in order to be able to use it (unless we need a reminder of how to write with a pencil again).

How can we design products to facilitate innate behaviours, rather than design products that force us to learn new skills? How can we become more human centric in our design philosophy?

Moving beyond touch

Not only did small screens push designers and technologists to explore other ways of interacting with technology, but new use cases and contexts also inspired us to start thinking of different ways that we could use it.

Voice commands, for example, work great while driving or cooking, but may cause a couple of stares while asking Siri where the nearest erotic massage parlour is on the train commute home.

Voice is a way that we can interact with the technology around us. It can be both passive and interactive. The great thing about voice is obviously that we don’t need any hands for it. However, there are limitations, such as context, which mean that it is not always going to be the most intuitive option. Voice recognition was also not really good enough to be trusted until very recently, but we are now at a point where it is eerily good.

Siri

Virtual reality (VR) was thrust into the mainstream with a lot of hype, supported by Facebook’s purchase of Oculus, the maker of the Oculus Rift, in 2014. Shortly after, Google presented Google Cardboard at I/O 2014, a low-cost VR solution a little lighter on the wallet than the $2 billion price tag of Oculus, and more low-cost alternatives are coming. Virtual reality places the user in a computer-simulated three-dimensional world, allowing us to feel immersed in the experience and move way beyond our fingers, hands and voice. Despite allowing us to use our entire body, virtual reality is constrained by the elaborate head gear.

Influential tech figures, such as Kevin Rose, boldly announced that “Virtual Reality will turn out to be a dud,” elaborating that “consumers will always take the path of least resistance.” A similar argument can be made in terms of usability. I must agree that the novelty factor is great, but anything so interactive needs to feel intuitive. Wearing a huge mask, sometimes tethered to your desktop computer, may well not be that intuitive. We are already one step closer to removing the computer tethering on some platforms, thanks to Gameface Labs, yet we are still hiding behind the VR mask.

Like touching. But without touching

Project Soli is a tiny radar that can turn basically any piece of hardware into an interactive device, controlled by delicate gestures. It comes from the Advanced Technology and Projects (ATAP) lab at Google and helps make the world our interface.

Now that Project Soli is open for a select group of developers to work on, the future of interaction design is limited only by our range of gestures.

Project Tango, meanwhile, equips devices that we already use to help navigate the physical world. It combines motion tracking, depth perception and area learning to help spatially process information. Because Project Tango is completely open source, the opportunity to innovate is pretty real. There are already some unique consumer products built, including Left Field Labs’ Soundfield and Space Sketchr. Lenovo will be releasing a Tango device, marking the beginning of using our smartphones to map our worlds in three-dimensional space.

With a whole lot of new technology and use cases, our job as designers is to make the experiences feel truly human. My ask is that we leverage existing human behaviours and use technology as a facilitator.

Read More at Design Beyond The Screen

from Web Design Ledger http://webdesignledger.com/design-beyond-screen/
